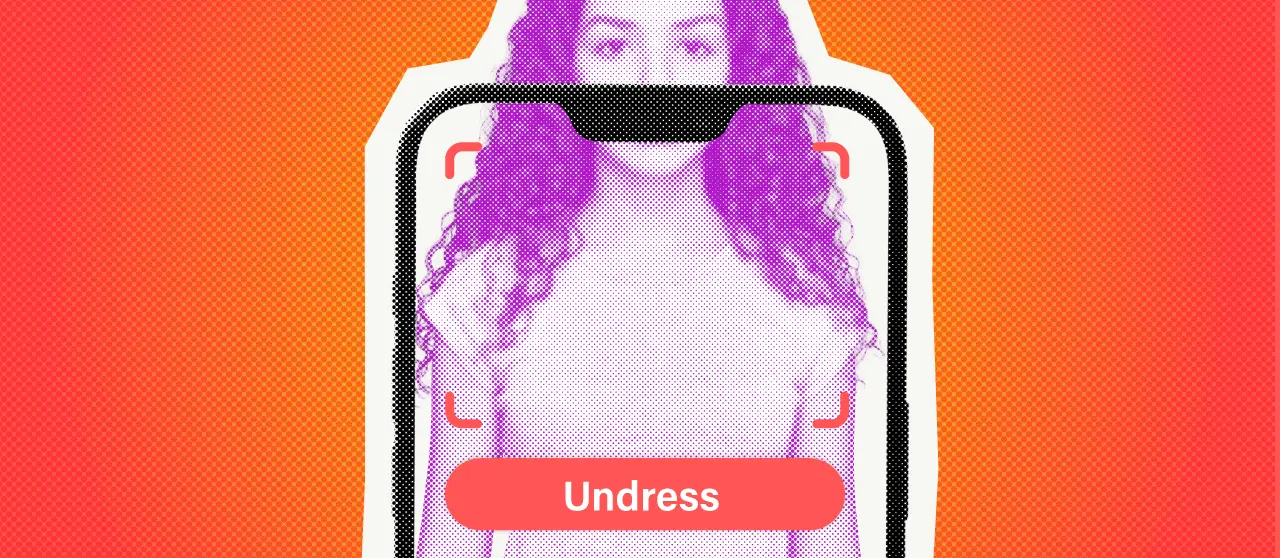

New Delhi: A new investigation has raised fresh concerns over the effectiveness of content moderation on major app marketplaces, revealing that AI-powered “nudify” applications, which can generate non-consensual intimate images, remain accessible on both Apple’s App Store and the Google Play Store despite being prohibited under platform policies.

Banned by Policy, But Still Just a Search Away

The findings, highlighted in a report by the Tech Transparency Project (TTP), suggest a widening gap between stated rules and real-world enforcement. According to the report, users searching for terms such as “nudify,” “undress,” and “deepnude” are still shown multiple applications capable of digitally altering images to create sexually explicit or semi-nude outputs.

The investigation found that nearly 40% of the apps appearing in such search results across both platforms included features that could be used to generate manipulated or explicit imagery. In total, 46 apps were identified in Apple App Store search results, of which at least 18 reportedly offered nudifying functions. On Google Play, 49 apps surfaced under similar queries, including around 20 with comparable capabilities.

What has further intensified concern is the scale of reach these apps have achieved. The report estimates that nudify-related applications have collectively been downloaded approximately 483 million times and generated more than $122 million in global revenue, indicating widespread availability despite formal restrictions.

Big Tech’s Enforcement Gap Exposed

A major red flag identified in the investigation is the accessibility of many of these apps to younger users. Several reportedly carried an “Everyone” rating, meaning they could be downloaded by minors. This has triggered renewed debate around the effectiveness of age-rating systems and parental controls on digital distribution platforms.

Both Apple and Google maintain strict guidelines prohibiting sexually explicit, pornographic, or exploitative content. However, enforcement appears inconsistent in practice. Google allows limited exceptions for nudity when it is considered educational, artistic, or scientific in nature—an allowance critics argue can be exploited by developers to bypass restrictions.

The report also flagged instances where advertisements promoting such applications appeared within search results, raising additional concerns about the role of automated ad systems in amplifying exposure to sensitive content. This has added pressure on platform operators to tighten both search algorithms and advertising review mechanisms.

This Is No Longer Just an App Store Problem

In response to the findings, both tech giants have reportedly taken partial corrective measures. Google stated that several of the flagged applications had already been suspended and that further investigation and enforcement actions were ongoing. Apple has also removed a number of apps identified in the report, although critics argue that such removals often occur only after external exposure rather than through proactive monitoring systems.

Beyond standalone apps, the issue is spreading into the broader artificial intelligence ecosystem. The report highlights that general-purpose AI chatbots and image generation tools are also being misused to produce similar manipulated content when prompted, raising concerns that the problem is no longer limited to niche applications but is becoming embedded in mainstream AI tools.

Child Safety Fears Grow Loud

Experts warn that this reflects a growing enforcement challenge for global technology companies. While policies exist on paper, rapid app deployment cycles, frequent rebranding by developers, and evolving AI capabilities make it difficult for moderation systems to keep pace with misuse.

Child safety advocates have also expressed alarm, noting that the availability of such applications could increase risks of harassment, exploitation, and non-consensual image creation, particularly among teenagers and young internet users who may unknowingly engage with these tools.

The report ultimately highlights a broader question facing Big Tech platforms: whether existing moderation frameworks are robust enough to manage the rapid spread of AI-driven misuse, or whether a fundamental redesign of enforcement systems is required.

As global regulators increasingly scrutinize AI-related harm and digital safety standards, Apple and Google are expected to face heightened pressure to strengthen oversight, improve detection systems, and ensure that harmful applications do not continue to circulate within their ecosystems at scale.