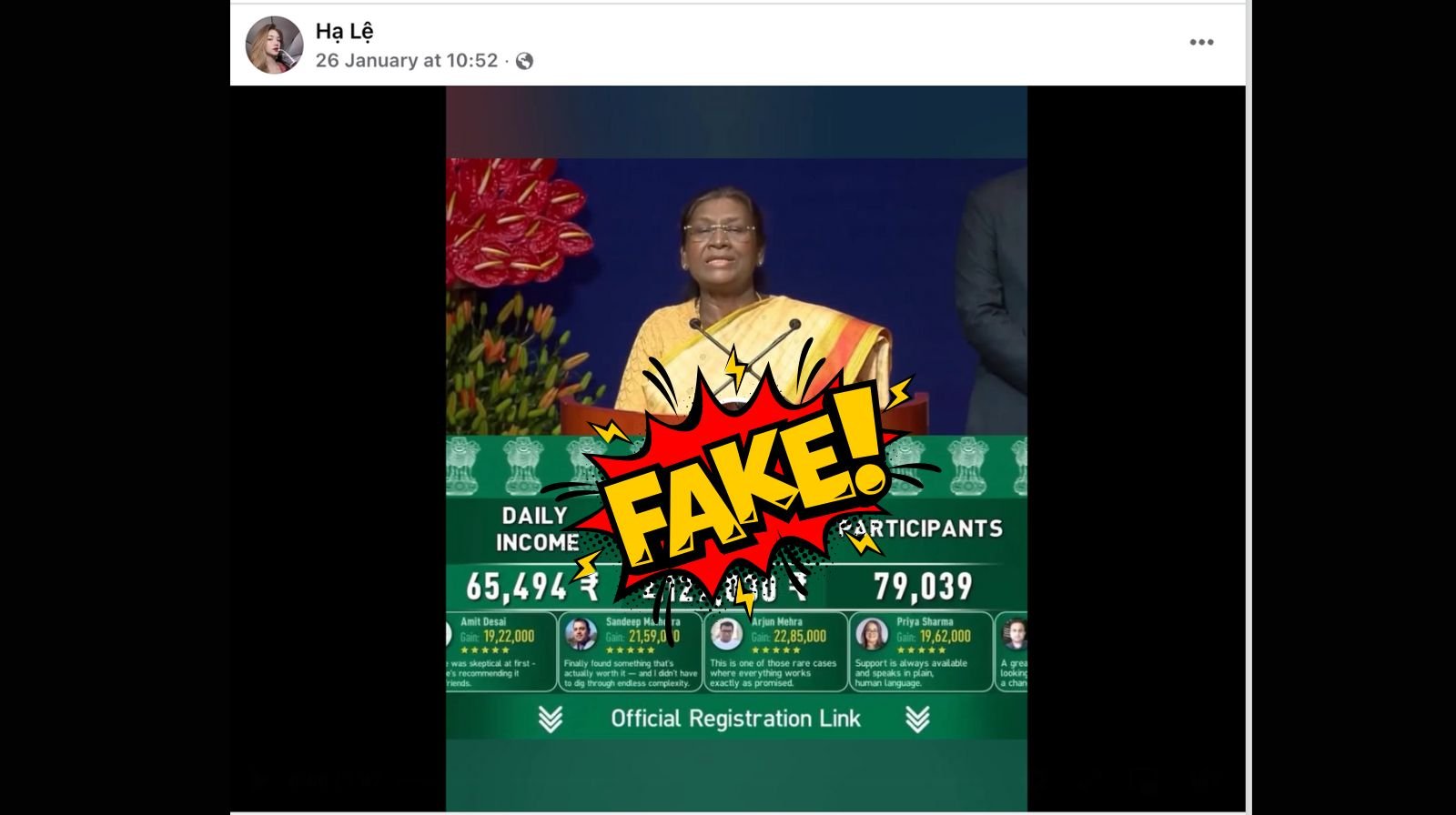

New Delhi: Meta is under growing scrutiny after it declined to take down a deepfake investment advertisement impersonating President Droupadi Murmu, despite the content being formally reported by a senior Indian Police Service officer from Andhra Pradesh Police.

The issue was publicly flagged by Dr Fakkeerappa Kaginelli, who shared details of the incident in a LinkedIn post, raising serious concerns about the social media giant’s content moderation standards, response timelines, and priorities—particularly when national dignity and public safety are at stake.

Two Minutes to Dismiss a Serious Threat

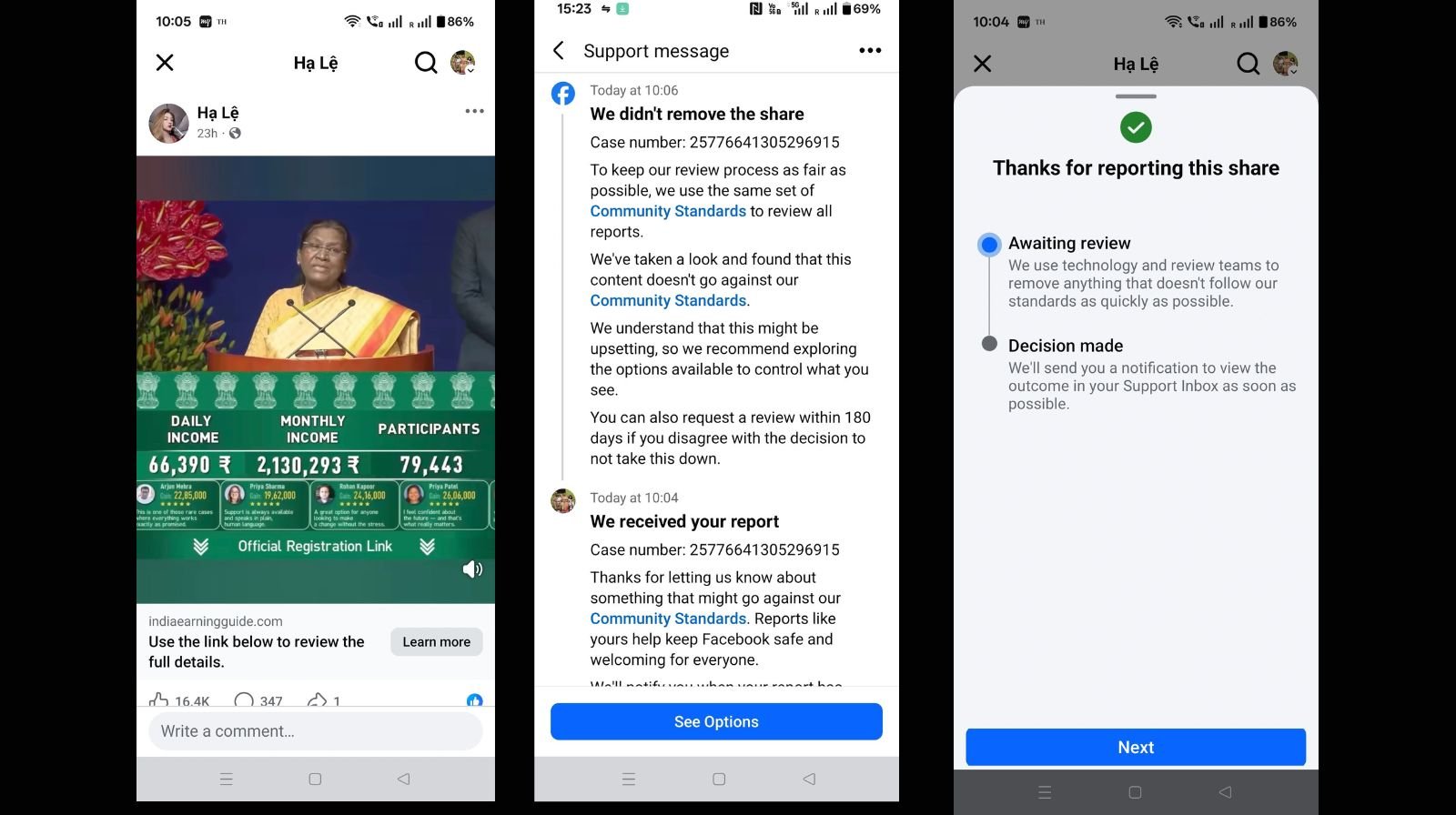

According to Dr Kaginelli, the misleading Facebook advertisement—featuring an AI-generated fake video of the President promoting a fraudulent investment scheme—was reported to Meta at 10:04 AM. By 10:06 AM, Meta responded that the content would not be removed, stating it did not violate the platform’s Community Standards.

Screenshots of Meta’s support response shared by the officer show the company concluding its review almost instantly and advising him to manage his content preferences rather than addressing the alleged impersonation.

“When fake AI content impersonating the highest constitutional authority of the country fails to meet the threshold for action, it raises serious concerns about the effectiveness—and intent—of these standards,” Dr Kaginelli wrote.

Republic Day Timing Raises Red Flags

The fake video was strategically posted on Republic Day, a day when public attention around the President is at its peak. Cybercrime investigators note that scammers often exploit national events, trending names, and moments of heightened trust to maximise reach and credibility.

At the time of reporting, the video had already amassed over 20,000 likes and more than 400 comments, indicating widespread circulation before any intervention by the platform.

In the manipulated clip, the President is falsely shown speaking about a lucrative investment opportunity—an established fraud pattern increasingly powered by generative AI tools.

Deepfakes, Profit, and Platform Responsibility

Dr Kaginelli’s post struck a broader chord by questioning whether commercial incentives are outweighing civic responsibility.

“When profit comes before public safety and national dignity, such outcomes become inevitable,” he noted.

The incident highlights a growing challenge for social media platforms globally: AI-driven impersonation scams that can be created cheaply, deployed rapidly, and scaled through paid advertisements—often faster than moderation systems can respond.

Not an Isolated Case

Law enforcement officials and cybersecurity experts say this case reflects a wider pattern of delayed or inadequate action by social media platforms, even when deceptive content is flagged by verified users, researchers, or police officers.

In recent months, multiple fake ads impersonating politicians, business leaders, and public institutions have surfaced, many of them remaining live for hours or days—long enough to cause financial harm to unsuspecting users.

Despite repeated assurances from Meta about investments in AI detection and human review teams, the response in this case, which rejected the takedown request within two minutes, has intensified concerns about over-reliance on automated systems.

Legal and Governance Implications

Impersonating the President of India for financial fraud potentially attracts provisions under Indian laws related to cheating, identity theft, and misuse of electronic communication. Legal experts argue that platforms hosting such content could face greater regulatory scrutiny if they fail to act swiftly after being notified.

The episode also feeds into the larger policy debate on platform accountability in the age of generative AI, where deepfakes pose risks not just to individuals, but to democratic institutions and public trust.

Calls for Stronger Safeguards

Cyber law specialists and former investigators say incidents like this underline the urgent need for:

- Immediate takedown protocols for impersonation of constitutional authorities

- Priority escalation channels for law enforcement-flagged content

- Human-led review for high-risk AI-generated material

- Greater transparency around why certain content is deemed compliant

As deepfake-enabled fraud accelerates, experts warn that slow or dismissive moderation responses could have far-reaching consequences.

Meta had not issued a public response to this specific incident at the time of publication.