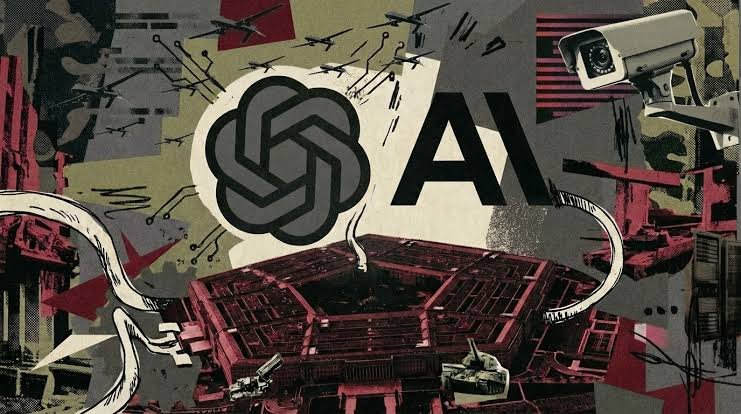

Washington: The use of artificial intelligence in warfare has triggered fresh unrest within the global tech community. Following recent U.S. strikes on Iran, nearly 900 employees from Google and OpenAI have jointly urged their companies to ensure that AI technologies are not deployed for military surveillance or lethal autonomous weapons.

The employees issued a collective letter expressing concern that the U.S. Department of Defense, commonly known as the Pentagon, is pressuring technology firms to align with defense objectives. The move follows reports that AI company Anthropic was blacklisted after refusing to allow its AI systems to be used for intelligence gathering or autonomous weapons applications.

Renewed Tensions for Google

For Google, this controversy echoes past internal resistance. In 2018, thousands of employees protested the company’s involvement in “Project Maven,” a Pentagon drone program, ultimately forcing the company to step back from the contract.

Now, reports suggest that Google has been in discussions about integrating its advanced AI model, Gemini, into defense intelligence systems. The situation has reignited internal debate, particularly after the company quietly revised its AI principles last year and removed explicit language prohibiting weapon-related applications.

Google’s Chief Scientist Jeff Dean has previously acknowledged concerns that AI-driven mass surveillance could undermine freedom of expression. However, the company has yet to issue a detailed public clarification regarding its current defense engagements.

Industry-Wide Support

The protest is not limited to Google and OpenAI. Employees from major firms including Salesforce, Databricks, IBM, and Cursor have also voiced support for Anthropic. The joint letter calls on authorities to reverse the decision labeling Anthropic a “supply chain risk.”

Signatories have further appealed to the U.S. Congress to examine whether extraordinary powers are being used appropriately against American technology companies.

Activist Groups Join the Debate

Advocacy group “No Tech For Apartheid” has urged cloud giants such as Google, Amazon, and Microsoft to reject defense contracts that could enable mass surveillance or misuse of AI systems. The group warned that deploying advanced AI models in classified military environments may carry significant ethical risks.

Comparisons have also been drawn between the potential deployment of Google’s Gemini model and the defense-sector access reportedly granted to xAI’s Grok system. While Anthropic and OpenAI have publicly outlined safeguards and limits in their agreements, Google has not yet provided similar transparency.

Growing Ethical Questions Around AI

As AI systems become increasingly powerful, questions about their boundaries and governance are intensifying. Critics argue that without clear restrictions, AI technologies could reshape modern warfare in unpredictable and potentially dangerous ways.

The latest pushback from employees signals a broader debate within Silicon Valley: whether cutting-edge AI innovation should serve national defense priorities or remain strictly confined to civilian and commercial use.

With geopolitical tensions rising and AI development accelerating, the clash between ethical responsibility and strategic interests is likely to deepen in the months ahead.