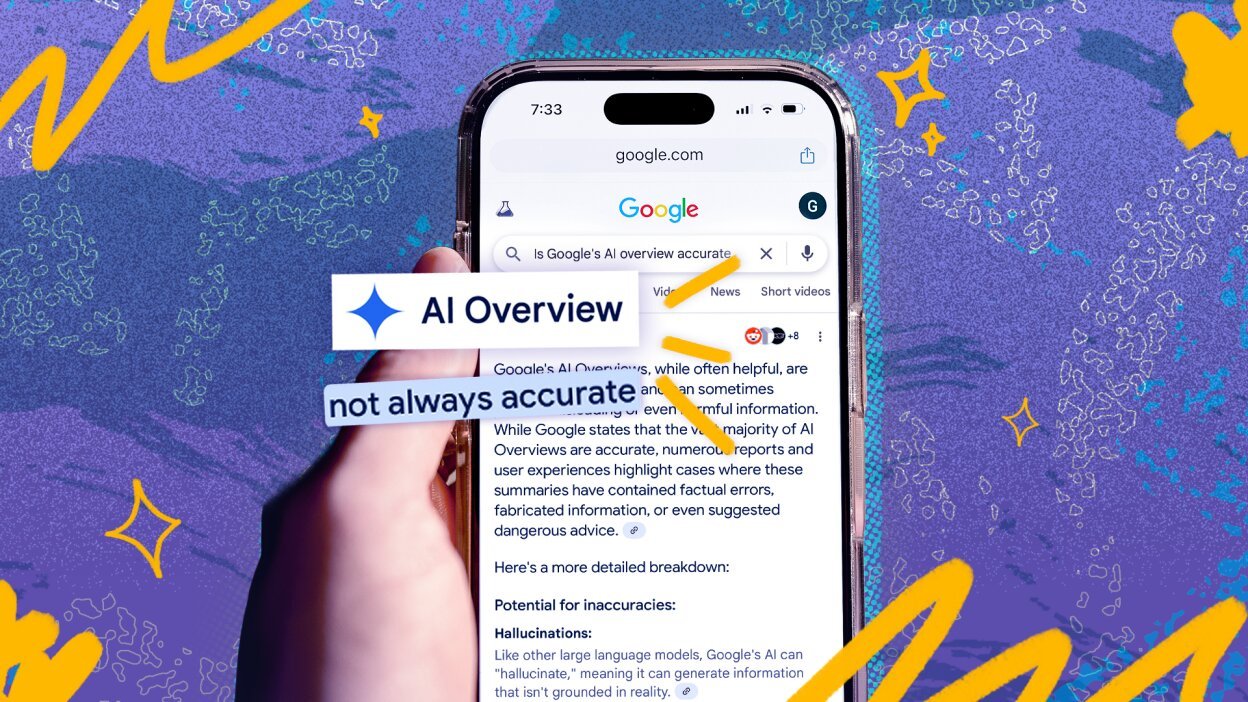

Google’s AI Overviews are delivering inaccurate or weakly supported information at a significant scale, according to an analysis that examined the performance of its Gemini models across thousands of search queries. The findings raise concerns about the reliability of AI-generated summaries that appear prominently in search results.

Accuracy gains mask deeper reliability concerns

The analysis, conducted by the AI startup Oumi at the behest of The New York Times, evaluated responses across 4,326 Google searches using a benchmark called SimpleQA. Gemini 3 produced factually sound responses 91 percent of the time, compared with 85 percent for Gemini 2, indicating overall improvement in accuracy.

However, the study found a rise in “ungrounded” answers, where cited sources did not support the claims made. Gemini 2 produced such responses 37 percent of the time, while Gemini 3 did so in 56 percent of cases. These responses make it harder for users to verify information and suggest that improvements in surface-level accuracy may be masking deeper issues in how answers are generated.

Google disputed the findings, with spokesperson Ned Adriance stating that the study contained “serious holes” and did not reflect real-world search behavior. The company maintains that AI Overviews are more reliable because they draw on existing search results before generating answers.

Scale of misinformation raises broader concerns

The analysis highlights the scale at which inaccurate information could be spreading. With Google processing roughly five trillion searches annually, even a small error rate translates into millions of incorrect answers every hour.
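The arithmetic behind that claim can be sketched roughly as follows. This is a back-of-envelope illustration, not a calculation from the study. It takes the five-trillion figure above and the 9 percent of Gemini 3 answers that were not factually sound (100 minus 91 percent). The `overview_share` parameter is a hypothetical stand-in for the fraction of searches that actually show an AI Overview, which the analysis as summarized here does not give.

```python
# Back-of-envelope estimate; every input is an approximation or an assumption.
SEARCHES_PER_YEAR = 5_000_000_000_000  # "roughly five trillion" searches annually
HOURS_PER_YEAR = 365 * 24              # 8,760 hours in a non-leap year


def wrong_answers_per_hour(error_rate, overview_share=1.0):
    """Estimate incorrect AI Overview answers per hour.

    error_rate: fraction of answers that are not factually sound.
    overview_share: hypothetical fraction of searches that display an
        AI Overview (1.0 treats every search as showing one, an upper bound).
    """
    searches_per_hour = SEARCHES_PER_YEAR / HOURS_PER_YEAR
    return searches_per_hour * overview_share * error_rate


# 0.09 comes from the 91 percent accuracy reported for Gemini 3.
# The 10 percent share is purely illustrative.
for share in (1.0, 0.1):
    estimate = wrong_answers_per_hour(error_rate=0.09, overview_share=share)
    print(f"overview share {share:.0%}: about {estimate / 1e6:.0f} million per hour")
```

Under these assumptions the estimate stays in the millions per hour even if only one search in ten displays an AI Overview.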

Researchers noted that users often trust AI-generated responses without verification. One report cited in the analysis found that only 8 percent of users double-check answers, while another experiment showed users continued to rely on AI even when it produced incorrect information nearly 80 percent of the time. This pattern has been described as “cognitive surrender,” where users defer judgment to automated systems.

The authoritative tone adopted by large language models can further reinforce this dynamic, as confident responses may be accepted as factual even when unsupported.

Testing methodology and internal findings

Oumi’s evaluation ran in two rounds, the first with Gemini 2 and the second with Gemini 3 after the upgrade. While external tests suggested improvement, internal findings cited in the report indicate that Gemini 3 still produced incorrect information 28 percent of the time.

The study also noted that ungrounded answers can stem from the way AI Overviews synthesize information, sometimes presenting claims that lack clear backing from cited sources. This raises questions about transparency and the ability of users to trace and verify the origins of AI-generated content.