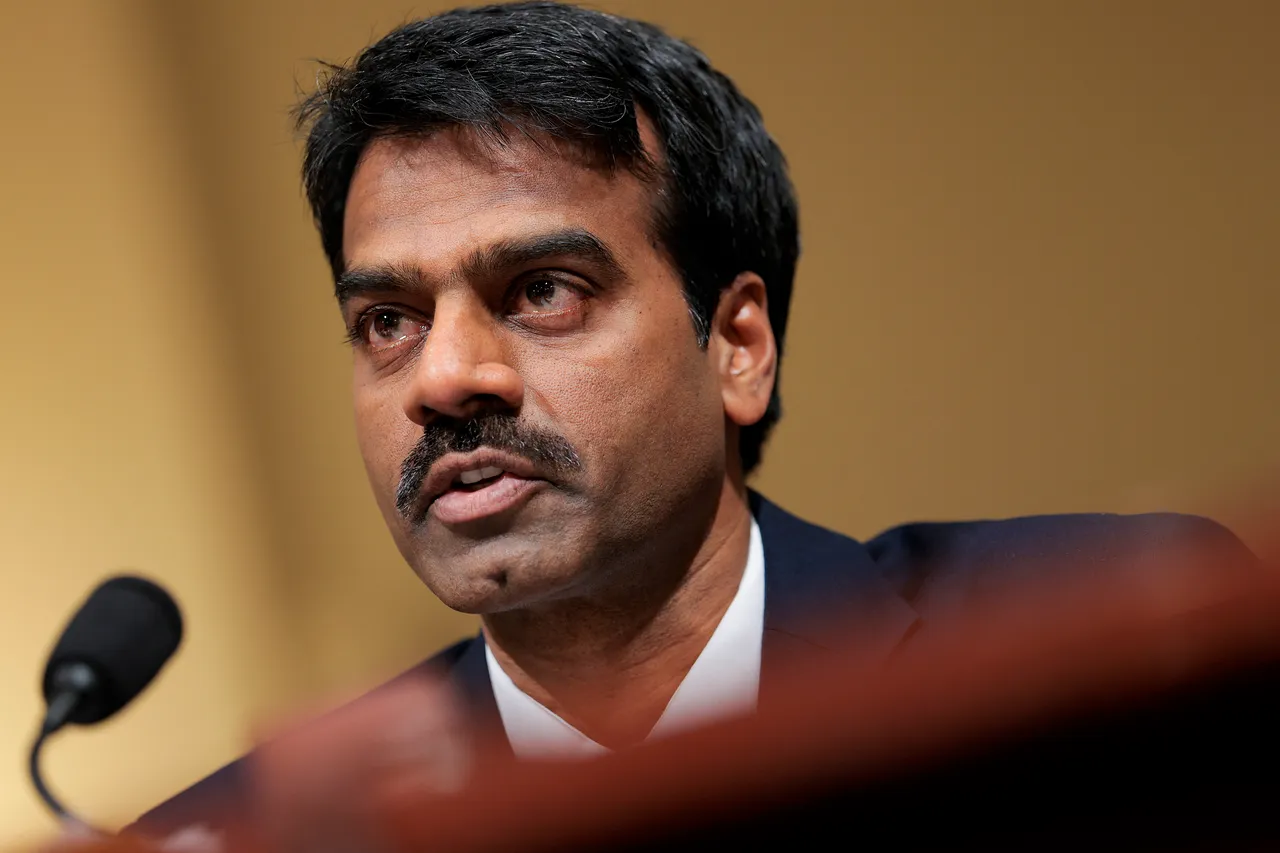

New Delhi: The acting head of the U.S. Cybersecurity and Infrastructure Security Agency (CISA) is facing serious allegations after reportedly uploading sensitive but unclassified government documents marked 'For Official Use Only' to public AI platforms such as ChatGPT. The uploads reportedly went ahead despite established security protocols and automated security alerts, creating a potential data leak risk.

Security Alerts Ignored

Reports indicate that the government network's automated security systems triggered multiple warnings as the official uploaded the files. These systems are designed to prevent the theft or accidental exposure of sensitive files. Notably, the official had been permitted limited use of ChatGPT, while other staff members were barred from sharing documents on the platform.
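
The reports do not describe how the agency's alerting actually works. As a rough illustration only, the hypothetical Python sketch below shows the general idea behind such checks, commonly called data loss prevention (DLP): scan an outbound upload for sensitivity markings and raise an alert when it is headed to an external AI service. The marking patterns, the watched domain list, and the `check_upload` function are assumptions made for this example, not details of CISA's or DHS's systems.

```python
import re
from dataclasses import dataclass

# Hypothetical marking patterns; real DLP products use far richer rule sets.
SENSITIVE_MARKINGS = [
    re.compile(r"\bFOR OFFICIAL USE ONLY\b", re.IGNORECASE),
    re.compile(r"\bFOUO\b"),
]

# Hypothetical list of external AI destinations the policy watches.
WATCHED_DESTINATIONS = {"chatgpt.com", "chat.openai.com"}


@dataclass
class Alert:
    destination: str
    filename: str
    marking: str


def check_upload(destination: str, filename: str, text: str) -> list[Alert]:
    """Return one alert per sensitivity marking found in an outbound upload."""
    if destination not in WATCHED_DESTINATIONS:
        return []  # Only uploads to watched destinations are inspected here.
    alerts = []
    for pattern in SENSITIVE_MARKINGS:
        match = pattern.search(text)
        if match:
            alerts.append(Alert(destination, filename, match.group(0)))
    return alerts


if __name__ == "__main__":
    doc = "UNCLASSIFIED//FOR OFFICIAL USE ONLY\nSample internal memo text"
    for alert in check_upload("chatgpt.com", "memo.txt", doc):
        print(f"ALERT: {alert.filename} -> {alert.destination} ({alert.marking})")
```

In this sketch the check only raises an alert and does not block the upload, which mirrors the reported situation in which warnings fired but the files still went through.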


High Risk of Data Exposure

Uploading internal documents to ChatGPT and other large language models (LLMs) is a serious concern from a cybersecurity perspective. Depending on the service and the user's settings, AI platforms may use submitted data to train future models, so sensitive information that has been uploaded could potentially surface in responses to other users' queries. Although the documents were unclassified, they contained internal operational details that confidentiality rules keep out of the public domain.

Previous Controversies and Internal Action

The official was appointed under the Trump administration. Earlier reports suggest that the official had failed a counterintelligence polygraph test, which was later invalidated by the Department of Homeland Security. Following the incident, six staff members under the official's supervision were suspended from accessing classified information.

CISA’s Statement

A CISA spokesperson stated that the official's use of ChatGPT was brief and limited. The Department of Homeland Security is currently investigating the extent of any damage caused by the uploads.

Warnings on Security and Privacy

Experts warn that ordinary users, not only government officials, should exercise extreme caution before uploading sensitive documents such as bank statements, medical records, ID documents, or legal agreements to ChatGPT or other AI chatbots. Some AI companies have human reviewers examine samples of chat histories to improve their systems, which can expose sensitive information to third parties. In addition, if uploaded data is used to train a future model, fragments of it could inadvertently resurface in responses to similar queries.