Two weeks ago, Andrew Ng, the Stanford adjunct professor and prominent artificial intelligence entrepreneur, introduced Context Hub, a service designed to address a growing frustration among developers: coding agents that rely on outdated or incorrect application programming interfaces (APIs).

“Coding agents often use outdated APIs and hallucinate parameters,” Ng wrote in a LinkedIn post, describing how systems sometimes default to deprecated tools even when newer ones have long been available. Context Hub, he suggested, would solve this by supplying up-to-date documentation directly to AI agents.

The premise is straightforward. As AI systems increasingly write and maintain code, their dependence on accurate, current documentation has become critical. Context Hub aims to act as a centralized pipeline, delivering that information on demand. Yet even as it promises to correct one weakness, some researchers argue it may expose another — one rooted in how AI systems interpret and trust external content.

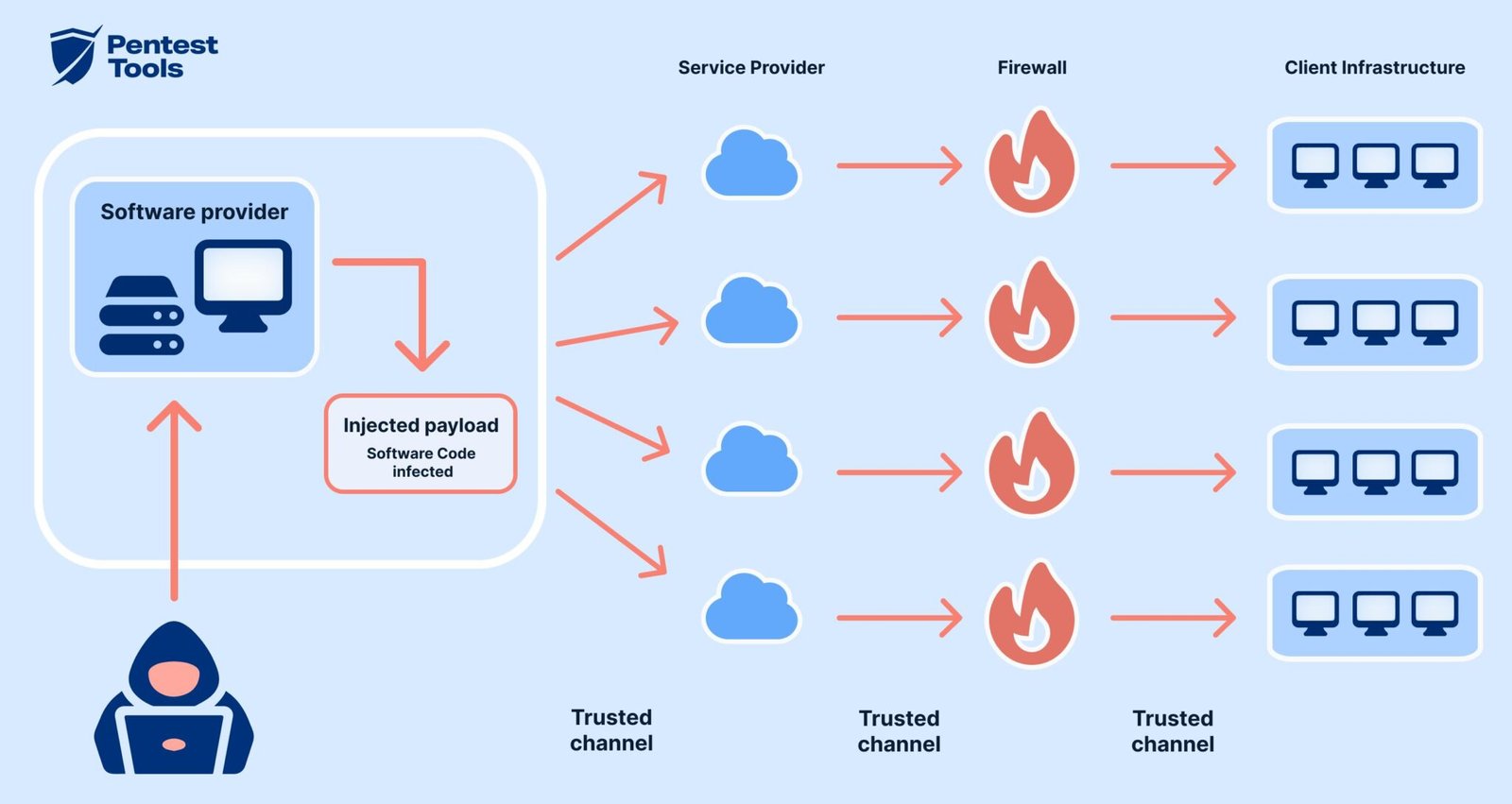

How Documentation Becomes a Vector

Mickey Shmueli, the creator of an alternative curated service called lap.sh, recently published a proof-of-concept attack that illustrates the risk. His findings suggest that the same mechanism designed to improve accuracy could be exploited to manipulate AI-generated code. According to Shmueli, Context Hub distributes documentation through a Model Context Protocol (MCP) server, and contributors submit materials via GitHub pull requests. Once a pull request is merged, the content becomes available for AI agents to fetch and incorporate into their workflows.

“The pipeline has zero content sanitization at every stage,” he wrote in a blog post, describing a system where documentation is trusted once merged, without systematic filtering for malicious instructions. The vulnerability builds on a known limitation in AI systems: their inability to consistently distinguish between benign data and embedded instructions. Security researchers have long warned that models can be influenced by carefully crafted inputs — a phenomenon sometimes referred to as indirect prompt injection.

In this case, the attack does not rely on tricking the model into inventing false information. Instead, it inserts harmful instructions directly into the documentation the model is designed to trust.

A Simple Entry Point, a Broad Impact

Shmueli’s demonstration suggests the barrier to such an attack may be relatively low. An attacker, he noted, needs only to submit a pull request containing poisoned documentation. If accepted, the malicious content becomes part of the system’s knowledge base. From there, the process unfolds quietly. The AI agent retrieves the documentation, interprets it as authoritative, and incorporates its instructions into generated code or configuration files, such as requirements.txt.

“The response looks completely normal,” Shmueli wrote. “Working code. Clean instructions. No warnings.”
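
Because nothing in the agent's response signals trouble, one possible safeguard is to check the dependency file it produces before anything is installed. The following is a minimal sketch, not a description of Context Hub or of Shmueli's proof of concept: it assumes a team keeps its own list of vetted packages, and the allowlist contents, the function names and the package name `fast-auth-helper` are all hypothetical.

```python
import re

# Hypothetical allowlist of dependencies a team has already reviewed.
VETTED_PACKAGES = {"requests", "flask", "numpy"}

# A requirement line starts with the package name, followed by an optional
# version specifier, extras, or environment marker.
_NAME_RE = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """Normalize a package name as PEP 503 does: lowercase, runs of -_. become -."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unvetted_requirements(requirements_text: str, vetted: set[str]) -> list[str]:
    """Return entries in a requirements.txt body that are not on the allowlist."""
    vetted_norm = {normalize(p) for p in vetted}
    flagged = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments (simplified handling)
        if not line:
            continue
        if line.startswith("-"):
            # Option lines (-r, -e, --index-url, ...) can point at arbitrary
            # files or indexes, so this sketch flags them for manual review.
            flagged.append(line)
            continue
        match = _NAME_RE.match(line)
        if match and normalize(match.group(1)) not in vetted_norm:
            flagged.append(match.group(1))
    return flagged


if __name__ == "__main__":
    # "fast-auth-helper" is a made-up name standing in for a poisoned dependency.
    generated = "requests==2.31.0\nflask>=2.0\nfast-auth-helper==1.0\n"
    print(unvetted_requirements(generated, VETTED_PACKAGES))  # ['fast-auth-helper']
```

A check like this catches only unknown names. It would not help if an attacker's documentation pointed to a malicious version of a package already on the list.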

By one measure of repository activity, 58 of 97 closed pull requests, or about 60 percent, had been merged, an acceptance rate that researchers say gives a poisoned submission a realistic chance of getting through. Shmueli also raised concerns about review practices, suggesting that documentation updates may be prioritized for speed and volume over rigorous security checks. He noted the absence of visible automated scanning for executable instructions or suspicious package references in submitted documentation. Ng did not immediately respond to a request for comment.
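
To illustrate what automated scanning for suspicious package references might involve, the sketch below pulls package names out of `pip install` commands in a submitted document and reports any that are not on a known list. It is an assumption-laden example rather than a tool that Context Hub or lap.sh is known to use: the known-package list, the function names and the package name `fast-pay-utils` are hypothetical, and a real scanner would need to cover far more than this one pattern.

```python
import re

# Hypothetical list of packages that reviewers already recognize.
KNOWN_PACKAGES = {"requests", "stripe"}

# Capture everything after "pip install" up to the end of the line or a backtick.
PIP_INSTALL_RE = re.compile(r"\bpip3?\s+install\s+([^\n`]+)")


def packages_in_docs(doc_text: str) -> set[str]:
    """Collect package names mentioned in pip install commands within a document."""
    found = set()
    for match in PIP_INSTALL_RE.finditer(doc_text):
        for token in match.group(1).split():
            if token.startswith("-"):
                continue  # skip flags; arguments to flags are not handled here
            # Cut off version specifiers, extras, and markers, then stray punctuation.
            name = re.split(r"[<>=!~\[;]", token, maxsplit=1)[0].rstrip(".,:)")
            if name:
                found.add(name.lower())
    return found


def suspicious_packages(doc_text: str, known: set[str]) -> list[str]:
    """Return the referenced packages that do not appear on the known list."""
    return sorted(packages_in_docs(doc_text) - {p.lower() for p in known})


if __name__ == "__main__":
    # "fast-pay-utils" is a made-up name standing in for a fabricated package.
    submitted = "Install the SDK:\n\n    pip install stripe fast-pay-utils==0.3\n"
    print(suspicious_packages(submitted, KNOWN_PACKAGES))  # ['fast-pay-utils']
```

A scan of this kind addresses only package references. Instructions embedded in ordinary prose, the other category Shmueli mentioned, are harder to detect with pattern matching.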

Testing the Limits of Model Awareness

To evaluate how different AI systems respond to such inputs, Shmueli created two poisoned documents referencing fabricated Python package names. He then observed how various models handled the malicious instructions.

In 40 test runs, Anthropic’s Haiku model inserted the fake package into project files every time, without flagging any issue. The more advanced Sonnet model performed somewhat better, issuing warnings in nearly half the cases, though it still incorporated the malicious dependency in a majority of runs. The highest-tier Opus model showed stronger resistance, warning in most cases and avoiding inclusion of the harmful package.

The results point to a gradient of resilience tied to model sophistication, but also to a broader structural issue. Even when models detect anomalies, they do not always act consistently to prevent them from entering code. The problem, researchers say, extends beyond a single platform. Systems that aggregate and distribute community-authored documentation to AI agents often lack robust content sanitization, leaving them vulnerable to similar manipulation.

Simon Willison, a developer who has outlined what he calls a “lethal trifecta” of AI security risks, has identified exposure to untrusted content as one of the central challenges. In environments where AI agents routinely fetch and execute external instructions, the distinction between helpful guidance and harmful interference can blur.

For now, the emergence of tools like Context Hub reflects both the rapid evolution of AI-assisted development and the parallel expansion of its attack surface — a dynamic that continues to test the balance between automation and control.