As demand for artificial intelligence accelerates worldwide, technology companies are racing to build the vast infrastructure required to power it. From massive data centers to electricity grids capable of sustaining them, the future of AI increasingly depends not only on algorithms and chips but also on energy, computing capacity, and the global race to supply them.

The Growing Appetite for Compute

The rapid rise of artificial intelligence has set off an unprecedented surge in demand for computing power, forcing technology companies to rethink the scale of the infrastructure required to support the next generation of AI systems.

Last December, OpenAI president Greg Brockman said that the company plans to commit roughly $1.4 trillion toward data center projects over the next eight years. The investment, he suggested, reflects an attempt to stay ahead in an increasingly competitive race to expand computing capacity.

“We want to be ahead of the curve,” Brockman said at the time. Yet even with such ambitions, he added, demand for compute may still outrun the company’s plans. “I don’t think we will be, no matter how ambitious we can dream of being right now.”

At the BlackRock Infrastructure Summit, OpenAI chief executive Sam Altman described the broader challenge facing the industry: moving beyond a world in which computing capacity is the binding constraint.

Inside technology companies, compute — the processing power used to train and run AI models — has become one of the most valuable resources in the industry. Engineers increasingly compete for access to specialized graphics processing units, or GPUs, which are essential for training large-scale AI systems. Some job candidates, executives say, now ask about their prospective employer’s AI compute budget alongside questions about salary and equity.

Infrastructure and the Energy Constraint

Powering that expansion presents a formidable challenge. Modern AI data centers can consume as much electricity as small cities, raising concerns about whether existing power grids can sustain the rapid expansion of AI infrastructure. In the United States, the strain on electricity systems — compounded by shortages of electrical transformers and the slow permitting process for transmission lines — could become a bottleneck for the industry.

The issue has drawn attention from some of the technology sector’s most prominent figures. In January, during an episode of the podcast Moonshots with Peter Diamandis, Elon Musk argued that electricity generation may now represent the primary limiting factor in scaling artificial intelligence.

Musk suggested that countries capable of expanding energy production more quickly could gain an advantage in the global AI race. He predicted that China might outpace the United States in total AI computing capacity if its energy infrastructure continues to expand at a faster rate.

The Infrastructure Sprint

Technology companies are already committing enormous resources to avoid those constraints. Executives across the sector have said that hundreds of billions of dollars will be spent this year alone on expanding the computing infrastructure needed to support AI development.

Speaking during a keynote at CES 2026, AMD chief executive Lisa Su said the world could require more than “10 yottaflops” of computing power over the next five years, roughly 10,000 times the world’s AI computing capacity in 2022.
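For readers unfamiliar with the unit, the comparison can be checked with a little arithmetic. The short Python sketch below assumes the standard SI meaning of the prefixes (a yottaflop is 10^24 floating-point operations per second, a zettaflop 10^21) and takes Su’s two figures at face value; the 2022 baseline it prints is only what those figures imply, not an independently reported measurement.

```python
# Standard SI prefixes applied to FLOP/s (floating-point operations per second).
ZETTA = 10**21
YOTTA = 10**24

# Figures as reported from Su's keynote, taken at face value.
projected_flops = 10 * YOTTA   # "more than 10 yottaflops"
growth_factor = 10_000         # "roughly 10,000 times" the 2022 level

# Working backward gives the 2022 capacity those two figures imply.
# Integer division keeps the arithmetic exact for these powers of ten.
implied_2022_flops = projected_flops // growth_factor

print(f"Projected demand:      {projected_flops:.0e} FLOP/s")
print(f"Implied 2022 capacity: {implied_2022_flops:.0e} FLOP/s")
print(f"In zettaflops:         {implied_2022_flops / ZETTA:.0f}")
```

Read this way, Su’s projection implies a 2022 baseline on the order of one zettaflop.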

Meeting that demand will require a combination of advanced chips, vast networks of data centers, and the electricity needed to operate them. In this environment, computing capacity increasingly determines who gains access to the most advanced AI systems.

Altman expects demand for AI to keep rising as businesses and governments adopt the technology across sectors ranging from software development to scientific research.

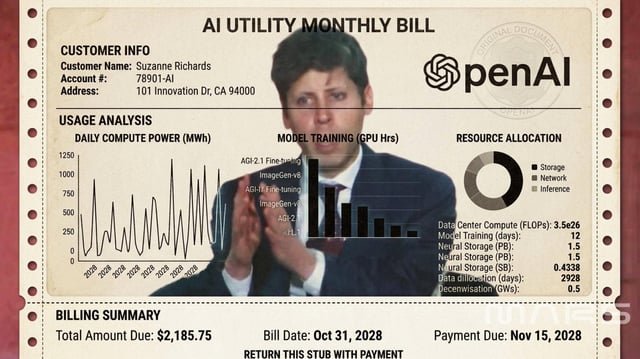

Artificial Intelligence as a Utility

The scale of these investments reflects a broader vision for how AI may ultimately be delivered. Altman has suggested that the business model for AI companies could increasingly resemble that of utilities. Rather than purchasing software outright, users may pay for access to AI capabilities in much the same way they pay for electricity or water.

“Fundamentally our business and I think the business of every other model provider is going to look like selling tokens,” Altman said, referring to the units AI systems use to process and price input and output data.

In such a model, intelligence would be delivered on demand, metered according to usage.

“We see a future where intelligence is a utility like electricity or water,” he said, “and people buy it from us on a meter and use it for whatever they want to use it for.”
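What metering by the token might look like in practice can be sketched in a few lines of Python. The sketch is purely illustrative: the prices, the `TokenRate` and `metered_charge` names, and the usage figures are hypothetical and do not reflect OpenAI’s or any other provider’s actual rates. It assumes, as many providers do, that input and output tokens are priced separately per million tokens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRate:
    """Hypothetical per-million-token prices, in US dollars."""
    input_per_million: float
    output_per_million: float


def metered_charge(input_tokens: int, output_tokens: int, rate: TokenRate) -> float:
    """Bill usage like a utility meter: pay only for the tokens consumed."""
    return (input_tokens * rate.input_per_million
            + output_tokens * rate.output_per_million) / 1_000_000


# Illustrative rates only; not any provider's actual pricing.
rate = TokenRate(input_per_million=2.00, output_per_million=8.00)

# A hypothetical month of requests as (input tokens, output tokens),
# summed like individual meter readings.
usage = [(12_000, 3_000), (250_000, 40_000), (5_000, 1_500)]
total = sum(metered_charge(i, o, rate) for i, o in usage)

print(f"Monthly AI line item: ${total:.2f}")
```

Like an electricity bill, the charge scales with consumption: a month with no requests costs nothing, and heavier use raises the bill in proportion.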

If that vision materializes, the infrastructure race now underway — involving data centers, power grids, and advanced computing hardware — may determine how widely that utility can ultimately be distributed.

In that future, artificial intelligence might not only transform industries. It could also arrive as another line item on a monthly utility bill.