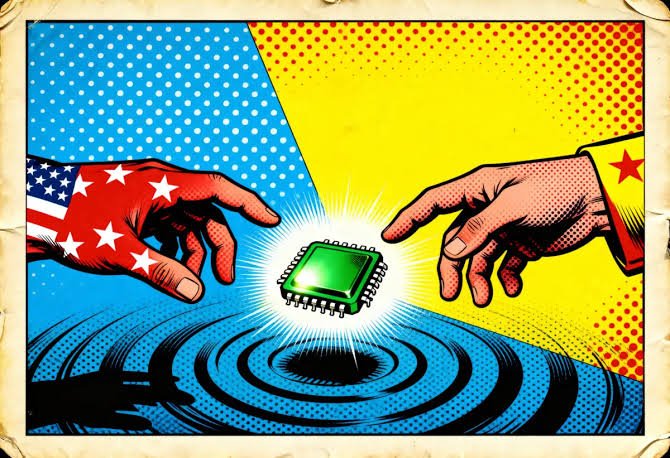

New Delhi: Competition and disputes over intellectual property in the global artificial intelligence sector are intensifying as US-based AI company Anthropic has accused three Chinese AI firms of attempting to illegally obtain its model technology. The company claims that around 24,000 fake accounts were used to carry out what it described as a “distillation attack” to extract proprietary capabilities.

Allegations of an Industrial-Scale Campaign

According to Anthropic, three AI laboratories — DeepSeek, Moonshot, and MiniMax — are suspected of conducting industrial-scale campaigns. The company alleged that these organizations attempted to extract capabilities from its Claude AI system to improve their own models. A blog post claimed that more than 16 million interactions were carried out through fake accounts in violation of service terms and regional access restrictions.

The issue is also being viewed through a national security lens. The company warned that such cyber campaigns are not limited to any single organization but could create broader strategic risks and further intensify global AI competition.


Earlier in January 2026, OpenAI also accused DeepSeek of engaging in similar distillation attacks, alleging attempts to steal internet data and model outputs. However, reactions to these claims have been mixed. Some experts argue that AI companies themselves have justified training models on publicly available or copyrighted data, which has fueled ethical and legal debates.

US President Donald Trump stated during an AI event in 2025 that knowledge acquisition should not be treated as direct copyright infringement, arguing that learning from reading books or articles does not necessarily require payment for information. Nevertheless, disagreements over intellectual property rights continue within the AI industry.

What Is a Distillation Attack?

A distillation attack is a technique in which an actor sends a large volume of similar or modified prompts to a large language model and uses its responses to train another model to imitate its behaviour. While distillation is also used legitimately to develop smaller and more cost-efficient models, it can be misused to reverse-engineer competitors' technologies.

Anthropic stated that such campaigns attempt to obtain advanced AI capabilities in less time and at lower cost, which could create an imbalance in global AI competition. However, the company also acknowledged that it is not yet fully clear whether the alleged activities violate international law.

A Growing Industry Dispute

Experts believe that such incidents could pose a major challenge to the industry amid growing investments in the AI sector. Companies such as Anthropic, OpenAI, Meta, and xAI are currently spending billions of dollars on AI infrastructure, data centres, and research and development. If rival companies succeed in acquiring advanced model technology at lower cost, it could affect the competitive advantage of US-based AI firms.

The company has urged the AI industry, governments, and other stakeholders to strengthen security mechanisms. The blog post emphasized that cyber and technological campaigns are becoming increasingly sophisticated, requiring coordinated global action to counter emerging threats.

National Security and Strategic Competition

Anthropic has so far suspended the accounts allegedly involved in the activity while its investigation and technical analysis continue. The company said it will release further information if there are significant developments in the case.