New Delhi | January 3, 2026: Veteran writer and lyricist Javed Akhtar has strongly condemned an AI-generated fake video circulating on social media, describing it as “rubbish” and indicating that he is seriously considering legal action against those responsible for creating and spreading the clip.

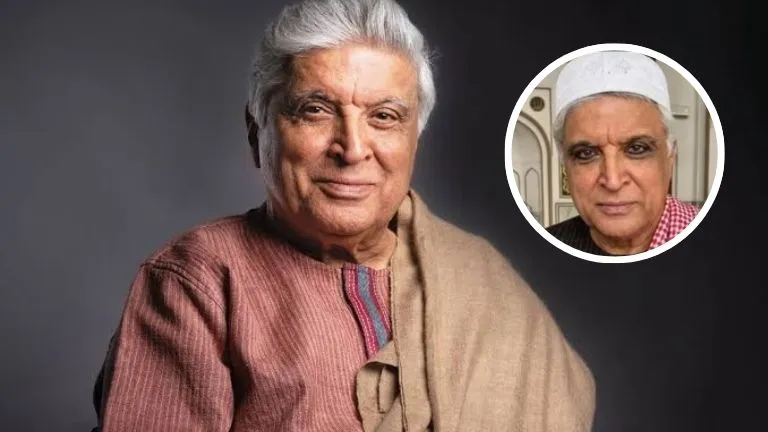

The viral video features a computer-generated image of Akhtar wearing a topi and falsely claims that he has “ultimately turned to God.” The lyricist categorically denied the assertion, saying the clip is entirely fabricated and intended to mislead viewers.

“A fake video is in circulation showing my fake computer-generated picture with a topi on my head, claiming that ultimately I have turned to God. It is rubbish,” Akhtar said in a note posted on his social media account.

‘Fake news damages reputation and credibility’

Posting on X (formerly Twitter), Akhtar said such content goes beyond routine misinformation and directly harms a person’s reputation and credibility, especially when it is made to appear authentic using artificial intelligence tools.

“These kinds of fake videos are extremely dangerous. They damage reputation and credibility, and I am seriously considering reporting the person responsible,” he wrote, underscoring the need for accountability in the digital ecosystem.

Akhtar’s comments come amid growing concern over the misuse of generative AI to produce deepfake videos that falsely attribute statements, beliefs, or actions to public figures. Media experts warn that such content can spread rapidly across platforms, often outpacing fact-checks and official clarifications.

Rising alarm over AI-generated misinformation

The spread of AI-generated fake content has emerged as a major challenge for governments, technology platforms, and law enforcement agencies in India and globally. In recent months, authorities have flagged a rise in deepfake videos targeting politicians, celebrities, and private individuals alike.

Several cases involving AI-morphed videos of public personalities are already under investigation, with officials stressing that existing laws on impersonation, defamation, and misinformation apply equally to AI-generated material.

Akhtar’s decision to publicly call out the video adds to a growing chorus of prominent voices demanding stricter safeguards and clearer accountability for the misuse of artificial intelligence.

A consistent voice against irrationality

Known for his outspoken views on rationalism, free thought, and social issues, Akhtar has often found himself at the centre of ideological debates. Supporters argue that the fake video appears designed to misrepresent his long-held public positions and provoke controversy.

Observers note that AI-driven misinformation is increasingly used to exploit religious, political, and cultural sensitivities, which makes such content particularly potent, and potentially divisive, in a diverse society like India.

Calls for stronger regulation and platform responsibility

Technology and media experts say the episode highlights the urgent need for clear regulatory frameworks governing generative AI tools, alongside faster and more consistent response mechanisms from social media platforms.

While platforms have announced policies to curb deepfakes, enforcement remains uneven, and victims often struggle to secure swift takedowns.

“Once a deepfake goes viral, the damage is already done,” said a digital media analyst. “Public figures speaking out helps underline the seriousness of the threat and the need for stronger deterrence.”

Next steps

Akhtar has not confirmed whether a formal complaint has been filed, but his statement suggests that legal action is likely. Legal experts say pursuing such cases can set important precedents and deter future misuse.